NeuralHash uses a cryptographic technique called private set intersection to detect a hash match without revealing what the image is or alerting the user. The results are uploaded to Apple but cannot be read on their own. Apple uses another cryptographic principle called threshold secret sharing that allows it to decrypt the contents only if a user crosses a threshold of known child abuse imagery in their iCloud Photos. Apple would not say what that threshold was, but said - for example - that if a secret is split into a thousand pieces and the threshold is ten images of child abuse content, the secret can be reconstructed from any of those ten images.

It’s at that point Apple can decrypt the matching images, manually verify the contents, disable a user’s account and report the imagery to NCMEC, which is then passed to law enforcement. Apple says this process is more privacy-mindful than scanning files in the cloud, as NeuralHash only searches for known and not new child abuse imagery.
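The thousand-pieces example can be made concrete with a small sketch. Apple has not said which construction it uses, so the code below assumes Shamir's secret sharing, a standard threshold scheme, purely for illustration. The field modulus `PRIME` and the function names `split_secret` and `recover_secret` are hypothetical choices, not anything taken from Apple's design.

```python
import secrets

# Illustrative field modulus (the Mersenne prime 2^127 - 1); an assumption,
# not a parameter from Apple's system.
PRIME = 2**127 - 1


def split_secret(secret, threshold, num_shares):
    """Split `secret` into `num_shares` points; any `threshold` of them recover it."""
    # Random polynomial of degree threshold - 1 whose constant term is the secret.
    coeffs = [secret] + [secrets.randbelow(PRIME) for _ in range(threshold - 1)]
    shares = []
    for x in range(1, num_shares + 1):
        y = 0
        for c in reversed(coeffs):  # Horner's rule, highest coefficient first
            y = (y * x + c) % PRIME
        shares.append((x, y))
    return shares


def recover_secret(shares):
    """Lagrange interpolation at x = 0 over the prime field."""
    secret = 0
    for i, (xi, yi) in enumerate(shares):
        num, den = 1, 1
        for j, (xj, _) in enumerate(shares):
            if i != j:
                num = (num * -xj) % PRIME
                den = (den * (xi - xj)) % PRIME
        # pow(den, -1, PRIME) is the modular inverse (Python 3.8+).
        secret = (secret + yi * num * pow(den, -1, PRIME)) % PRIME
    return secret


# A thousand pieces with a threshold of ten, as in Apple's example.
shares = split_secret(123456789, threshold=10, num_shares=1000)
print(recover_secret(shares[:10]) == 123456789)     # any ten shares suffice
print(recover_secret(shares[500:509]) == 123456789)  # nine shares do not
```

In this sketch each share stands in for one matching image. Below the threshold, the shares fit many different polynomials, so they reveal nothing useful about the secret.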

The news was met with some resistance from some security experts and privacy advocates, but also from users who are accustomed to Apple’s approach to security and privacy that most other companies don’t have.

Apple is trying to calm fears by baking in privacy through multiple layers of encryption, fashioned in a way that requires multiple steps before it ever makes it into the hands of Apple’s final manual review.

NeuralHash will land in iOS 15 and macOS Monterey, slated to be released in the next month or two, and works by converting the photos on a user’s iPhone or Mac into a unique string of letters and numbers, known as a hash. Any time you modify an image slightly, it changes the hash and can prevent matching. Apple says NeuralHash tries to ensure that identical and visually similar images - such as cropped or edited images - result in the same hash.

Before an image is uploaded to iCloud Photos, those hashes are matched on the device against a database of known hashes of child abuse imagery, provided by child protection organizations like the National Center for Missing & Exploited Children (NCMEC) and others.
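The difference between an ordinary hash, which changes with any edit, and one that tolerates small changes can be sketched as follows. This is not NeuralHash, which Apple describes only at a high level. The sketch swaps in a much simpler "average hash" over a tiny made-up grayscale grid, and the names `average_hash` and `sha256_hex` are hypothetical.

```python
import hashlib


def average_hash(pixels):
    """Toy perceptual hash: one bit per pixel, set if brighter than the image mean."""
    flat = [p for row in pixels for p in row]
    mean = sum(flat) / len(flat)
    return "".join("1" if p > mean else "0" for p in flat)


def sha256_hex(pixels):
    """Ordinary cryptographic hash of the raw pixel bytes."""
    return hashlib.sha256(bytes(p for row in pixels for p in row)).hexdigest()


# A made-up 4x4 grayscale image: dark left half, bright right half.
image = [
    [10, 20, 200, 210],
    [15, 25, 205, 215],
    [12, 22, 202, 212],
    [18, 28, 208, 218],
]
# A slightly edited copy: every pixel brightened by 3.
brightened = [[min(255, p + 3) for p in row] for row in image]

print(sha256_hex(image) == sha256_hex(brightened))      # False: any edit changes it
print(average_hash(image) == average_hash(brightened))  # True: same coarse structure
```

The toy hash survives a uniform brightness change because it records only which pixels sit above the mean. A production system would need to tolerate cropping, resizing and recompression as well, which this sketch does not attempt.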

News of Apple’s effort leaked Wednesday when Matthew Green, a cryptography professor at Johns Hopkins University, revealed the existence of the new technology in a series of tweets.

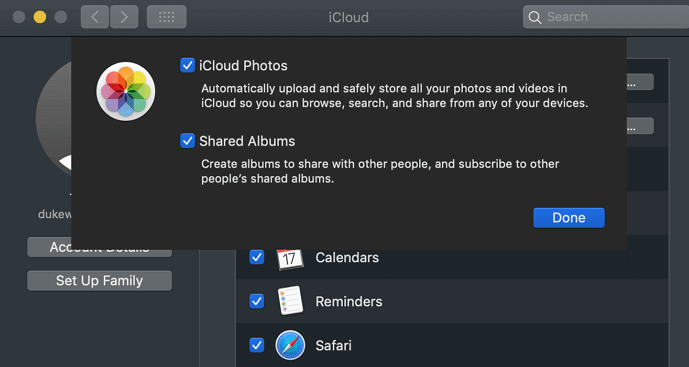

Later this year, Apple will roll out a technology that will allow the company to detect and report known child sexual abuse material to law enforcement in a way it says will preserve user privacy.

Apple told TechCrunch that the detection of child sexual abuse material (CSAM) is one of several new features aimed at better protecting the children who use its services from online harm, including filters to block potentially sexually explicit photos sent and received through a child’s iMessage account. Another feature will intervene when a user tries to search for CSAM-related terms through Siri and Search.

Most cloud services - Dropbox, Google, and Microsoft to name a few - already scan user files for content that might violate their terms of service or be potentially illegal, like CSAM. But Apple has long resisted scanning users’ files in the cloud by giving users the option to encrypt their data before it ever reaches Apple’s iCloud servers.

Apple said its new CSAM detection technology - NeuralHash - instead works on a user’s device, and can identify if a user uploads known child abuse imagery to iCloud without decrypting the images until a threshold is met and a sequence of checks to verify the content are cleared.
